image naming conventions"Using clear, descriptive file names for images makes it easier for search engines to understand the image content. Naming conventions that include keywords help improve search visibility and attract more traffic to your site."

image optimization"Image optimization involves reducing file sizes, using descriptive file names, and adding alt text to improve search visibility. Best SEO Agency Sydney Australia.

image optimization"Image optimization involves using descriptive file names, adding alt text, and reducing file sizes to enhance page load speed and accessibility. Optimized images improve user experience, boost page performance, and can contribute to better search engine rankings."

Best SEO Sydney Agency.image optimization"Image optimization involves reducing file sizes, using appropriate formats, and adding descriptive metadata to improve website load times and search visibility. By optimizing images, you enhance user experience, lower bounce rates, and boost your sites overall performance."

image optimization"Image optimization involves reducing file sizes, adding alt text, and using descriptive filenames. Optimized images load faster, improve accessibility, and contribute to a better user experience, which can enhance SEO performance."

image optimization analytics"Image optimization analytics track file sizes, load times, and user engagement metrics. Best Search Engine Optimisation Services. By reviewing these analytics, you can identify areas for improvement, refine your approach, and ensure a better-performing website."

image optimization benchmarks"Image optimization benchmarks provide performance standards to measure how well images load, render, and enhance user experience. Comparing your sites performance to industry benchmarks helps you identify improvement areas and achieve optimal results."

image optimization best practices"Image optimization best practices include compressing files, using descriptive alt text, and ensuring responsive display. Following these practices leads to better load times, improved accessibility, and increased search engine visibility."

image optimization for WordPress"Optimizing images in WordPress involves using plugins and settings that compress files, add alt text, and ensure responsive display. By following best practices for WordPress, you enhance site speed and improve SEO performance."

Best Local SEO Sydney.

image optimization guides"Image optimization guides offer step-by-step instructions for improving file sizes, dimensions, and metadata. These guides help ensure that your images are fully optimized, resulting in faster load times and better search rankings."

image optimization metrics"Tracking image optimization metricssuch as load speed, file size, and engagement rateshelps evaluate performance and identify areas for improvement. By monitoring these metrics, you ensure that your images contribute to a fast, user-friendly site."

image optimization plugins"Image optimization plugins automate the process of compressing, resizing, and optimizing images for websites. These plugins save time, improve load speeds, and maintain high-quality visuals without manual intervention."

SEO Services .image optimization strategies"Image optimization strategies outline best practices for reducing file sizes, enhancing quality, and improving metadata. Following these strategies results in faster load times, improved user experience, and better search rankings."

image optimization testing tools"Image optimization testing tools measure file sizes, load times, and display quality across devices. Using these tools helps identify areas for improvement, ensuring that your images perform well and enhance overall site performance."

image optimization toolsUsing image optimization toolssuch as compression software and format convertersstreamlines the process of reducing file sizes and improving quality.

image optimization tutorials"Tutorials provide step-by-step guidance for compressing, resizing, and enhancing images. Following these tutorials ensures that your images are fully optimized, resulting in faster load times and better search rankings."

image optimization workflow"Establishing a clear image optimization workflow streamlines the process of compressing, resizing, and adding metadata. A well-defined workflow helps maintain consistency, improves efficiency, and ensures better image performance."

image optimization workflow automation"Workflow automation streamlines image compression, resizing, and metadata updates. By automating these tasks, you save time, maintain consistent quality, and ensure that your images remain optimized at all times."

image performance benchmarks"Establishing performance benchmarks for images helps measure how well they load and render on various devices. Benchmarks provide a reference point for identifying issues, refining optimization efforts, and improving overall site performance."

image performance monitoring"Image performance monitoring tracks how well images load and render on different devices. By analyzing performance data, you can identify bottlenecks, improve load speeds, and ensure a smooth user experience."

image quality settings"Adjusting image quality settings allows you to balance clarity and file size. By optimizing these settings, you maintain a visually appealing site while improving load times and enhancing overall performance."

|

|

|

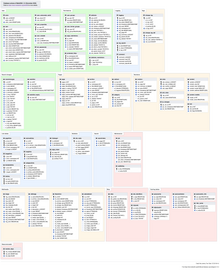

Screenshot

The Main Page of the English Wikipedia running an alpha version of MediaWiki 1.40

|

|

| Original author(s) | |

|---|---|

| Developer(s) | Wikimedia Foundation |

| Initial release | January 25, 2002 |

| Stable release |

1.43.0[1]

|

| Repository | |

| Written in | PHP[2] |

| Operating system | Windows, macOS, Linux, FreeBSD, OpenBSD, Solaris |

| Size | 79.05 MiB (compressed) |

| Available in | 459[3] languages |

| Type | Wiki software |

| License | GPLv2+[4] |

| Website | mediawiki |

MediaWiki is free and open-source wiki software originally developed by Magnus Manske for use on Wikipedia on January 25, 2002, and further improved by Lee Daniel Crocker,[5][6] after which development has been coordinated by the Wikimedia Foundation. It powers several wiki hosting websites across the Internet, as well as most websites hosted by the Wikimedia Foundation including Wikipedia, Wiktionary, Wikimedia Commons, Wikiquote, Meta-Wiki and Wikidata, which define a large part of the set requirements for the software.[7] Besides its usage on Wikimedia sites, MediaWiki has been used as a knowledge management and content management system on websites such as Fandom, wikiHow and major internal installations like Intellipedia and Diplopedia.

MediaWiki is written in the PHP programming language and stores all text content into a database. The software is optimized to efficiently handle large projects, which can have terabytes of content and hundreds of thousands of views per second.[7][8] Because Wikipedia is one of the world's largest and most visited websites, achieving scalability through multiple layers of caching and database replication has been a major concern for developers. Another major aspect of MediaWiki is its internationalization; its interface is available in more than 400 languages.[9] The software has hundreds of configuration settings[10] and more than 1,000 extensions available for enabling various features to be added or changed.[11]

MediaWiki provides a rich core feature set and a mechanism to attach extensions to provide additional functionality.

Due to the strong emphasis on multilingualism in the Wikimedia projects, internationalization and localization has received significant attention by developers. The user interface has been fully or partially translated into more than 400 languages on translatewiki.net,[9] and can be further customized by site administrators (the entire interface is editable through the wiki).

Several extensions, most notably those collected in the MediaWiki Language Extension Bundle, are designed to further enhance the multilingualism and internationalization of MediaWiki.

Installation of MediaWiki requires that the user have administrative privileges on a server running both PHP and a compatible type of SQL database. Some users find that setting up a virtual host is helpful if the majority of one's site runs under a framework (such as Zope or Ruby on Rails) that is largely incompatible with MediaWiki.[12] Cloud hosting can eliminate the need to deploy a new server.[13]

An installation PHP script is accessed via a web browser to initialize the wiki's settings. It prompts the user for a minimal set of required parameters, leaving further changes, such as enabling uploads,[14] adding a site logo,[15] and installing extensions, to be made by modifying configuration settings contained in a file called LocalSettings.php.[16] Some aspects of MediaWiki can be configured through special pages or by editing certain pages; for instance, abuse filters can be configured through a special page,[17] and certain gadgets can be added by creating JavaScript pages in the MediaWiki namespace.[18] The MediaWiki community publishes a comprehensive installation guide.[19]

One of the earliest differences between MediaWiki (and its predecessor, UseModWiki) and other wiki engines was the use of "free links" instead of CamelCase. When MediaWiki was created, it was typical for wikis to require text like "WorldWideWeb" to create a link to a page about the World Wide Web; links in MediaWiki, on the other hand, are created by surrounding words with double square brackets, and any spaces between them are left intact, e.g. [[World Wide Web]]. This change was logical for the purpose of creating an encyclopedia, where accuracy in titles is important.

MediaWiki uses an extensible[20] lightweight wiki markup designed to be easier to use and learn than HTML. Tools exist for converting content such as tables between MediaWiki markup and HTML.[21] Efforts have been made to create a MediaWiki markup spec, but a consensus seems to have been reached that Wikicode requires context-sensitive grammar rules.[22][23] The following side-by-side comparison illustrates the differences between wiki markup and HTML:

| MediaWiki syntax (the "behind the scenes" code used to add formatting to text) |

HTML equivalent (another type of "behind the scenes" code used to add formatting to text) |

Rendered output (seen onscreen by a site viewer) |

|---|---|---|

====A dialogue====

"Take some more [[tea]]," the March Hare said to Alice, very earnestly.

"I've had nothing yet," Alice replied in an offended tone: "so I can't take more."

"You mean you can't take ''less''," said the Hatter: "it's '''very''' easy to take ''more'' than nothing."

|

<h4>A dialogue</h4>

<p>"Take some more <a href="/wiki/Tea" title="Tea">tea</a>," the March Hare said to Alice, very earnestly.</p> <br>

<p>"I've had nothing yet," Alice replied in an offended tone: "so I can't take more."</p> <br>

<p>"You mean you can't take <i>less</i>," said the Hatter: "it's <b>very</b> easy to take <i>more</i> than nothing."</p>

|

A dialogue

"Take some more tea," the March Hare said to Alice, very earnestly. "I've had nothing yet," Alice replied in an offended tone: "so I can't take more." "You mean you can't take less," said the Hatter: "it's very easy to take more than nothing." |

(Quotation above from Alice's Adventures in Wonderland by Lewis Carroll)

MediaWiki's default page-editing tools have been described as somewhat challenging to learn.[24] A survey of students assigned to use a MediaWiki-based wiki found that when they were asked an open question about main problems with the wiki, 24% cited technical problems with formatting, e.g. "Couldn't figure out how to get an image in. Can't figure out how to show a link with words; it inserts a number."[25]

To make editing long pages easier, MediaWiki allows the editing of a subsection of a page (as identified by its header). A registered user can also indicate whether or not an edit is minor. Correcting spelling, grammar or punctuation are examples of minor edits, whereas adding paragraphs of new text is an example of a non-minor edit.

Sometimes while one user is editing, a second user saves an edit to the same part of the page. Then, when the first user attempts to save the page, an edit conflict occurs. The second user is then given an opportunity to merge their content into the page as it now exists following the first user's page save.

MediaWiki's user interface has been localized in many different languages. A language for the wiki content itself can also be set, to be sent in the "Content-Language" HTTP header and "lang" HTML attribute.

VisualEditor has its own integrated wikitext editing interface known as 2017 wikitext editor, the older editing interface is known as 2010 wikitext editor.

MediaWiki has an extensible web API (application programming interface) that provides direct, high-level access to the data contained in the MediaWiki databases. Client programs can use the API to log in, get data, and post changes. The API supports thin web-based JavaScript clients and end-user applications (such as vandal-fighting tools). The API can be accessed by the backend of another web site.[26] An extensive Python bot library, Pywikibot,[27] and a popular semi-automated tool called AutoWikiBrowser, also interface with the API.[28] The API is accessed via URLs such as https://en.wikipedia.org/w/api.php?action=query&list=recentchanges. In this case, the query would be asking Wikipedia for information relating to the last 10 edits to the site. One of the perceived advantages of the API is its language independence; it listens for HTTP connections from clients and can send a response in a variety of formats, such as XML, serialized PHP, or JSON.[29] Client code has been developed to provide layers of abstraction to the API.[30]

Among the features of MediaWiki to assist in tracking edits is a Recent Changes feature that provides a list of recent edits to the wiki. This list contains basic information about those edits such as the editing user, the edit summary, the page edited, as well as any tags (e.g. "possible vandalism")[31] added by customizable abuse filters and other extensions to aid in combating unhelpful edits.[32] On more active wikis, so many edits occur that it is hard to track Recent Changes manually. Anti-vandal software, including user-assisted tools,[33] is sometimes employed on such wikis to process Recent Changes items. Server load can be reduced by sending a continuous feed of Recent Changes to an IRC channel that these tools can monitor, eliminating their need to send requests for a refreshed Recent Changes feed to the API.[34][35]

Another important tool is watchlisting. Each logged-in user has a watchlist to which the user can add whatever pages he or she wishes. When an edit is made to one of those pages, a summary of that edit appears on the watchlist the next time it is refreshed.[36] As with the recent changes page, recent edits that appear on the watchlist contain clickable links for easy review of the article history and specific changes made.

There is also the capability to review all edits made by any particular user. In this way, if an edit is identified as problematic, it is possible to check the user's other edits for issues.

MediaWiki allows one to link to specific versions of articles. This has been useful to the scientific community, in that expert peer reviewers could analyse articles, improve them and provide links to the trusted version of that article.[37]

Navigation through the wiki is largely through internal wikilinks. MediaWiki's wikilinks implement page existence detection, in which a link is colored blue if the target page exists on the local wiki and red if it does not. If a user clicks on a red link, they are prompted to create an article with that title. Page existence detection makes it practical for users to create "wikified" articles—that is, articles containing links to other pertinent subjects—without those other articles being yet in existence.

Interwiki links function much the same way as namespaces. A set of interwiki prefixes can be configured to cause, for instance, a page title of wikiquote:Jimbo Wales to direct the user to the Jimbo Wales article on Wikiquote.[38] Unlike internal wikilinks, interwiki links lack page existence detection functionality, and accordingly there is no way to tell whether a blue interwiki link is broken or not.

Interlanguage links are the small navigation links that show up in the sidebar in most MediaWiki skins that connect an article with related articles in other languages within the same Wiki family. This can provide language-specific communities connected by a larger context, with all wikis on the same server or each on its own server.[39]

Previously, Wikipedia used interlanguage links to link an article to other articles on the same topic in other editions of Wikipedia. This was superseded by the launch of Wikidata.[40]

Page tabs are displayed at the top of pages. These tabs allow users to perform actions or view pages that are related to the current page. The available default actions include viewing, editing, and discussing the current page. The specific tabs displayed depend on whether the user is logged into the wiki and whether the user has sysop privileges on the wiki. For instance, the ability to move a page or add it to one's watchlist is usually restricted to logged-in users. The site administrator can add or remove tabs by using JavaScript or installing extensions.[41]

Each page has an associated history page from which the user can access every version of the page that has ever existed and generate diffs between two versions of his choice. Users' contributions are displayed not only here, but also via a "user contributions" option on a sidebar. In a 2004 article, Carl Challborn and Teresa Reimann noted that "While this feature may be a slight deviation from the collaborative, 'ego-less' spirit of wiki purists, it can be very useful for educators who need to assess the contribution and participation of individual student users."[42]

MediaWiki provides many features beyond hyperlinks for structuring content. One of the earliest such features is namespaces. One of Wikipedia's earliest problems had been the separation of encyclopedic content from pages pertaining to maintenance and communal discussion, as well as personal pages about encyclopedia editors. Namespaces are prefixes before a page title (such as "User:" or "Talk:") that serve as descriptors for the page's purpose and allow multiple pages with different functions to exist under the same title. For instance, a page titled "[[The Terminator]]", in the default namespace, could describe the 1984 movie starring Arnold Schwarzenegger, while a page titled "[[User:The Terminator]]" could be a profile describing a user who chooses this name as a pseudonym. More commonly, each namespace has an associated "Talk:" namespace, which can be used to discuss its contents, such as "User talk:" or "Template talk:". The purpose of having discussion pages is to allow content to be separated from discussion surrounding the content.[43][44]

Namespaces can be viewed as folders that separate different basic types of information or functionality. Custom namespaces can be added by the site administrators. There are 16 namespaces by default for content, with 2 "pseudo-namespaces" used for dynamically generated "Special:" pages and links to media files. Each namespace on MediaWiki is numbered: content page namespaces have even numbers and their associated talk page namespaces have odd numbers.[45]

Users can create new categories and add pages and files to those categories by appending one or more category tags to the content text. Adding these tags creates links at the bottom of the page that take the reader to the list of all pages in that category, making it easy to browse related articles.[46] The use of categorization to organize content has been described as a combination of:

In addition to namespaces, content can be ordered using subpages. This simple feature provides automatic breadcrumbs of the pattern [[Page title/Subpage title]] from the page after the slash (in this case, "Subpage title") to the page before the slash (in this case, "Page title").

If the feature is enabled, users can customize their stylesheets and configure client-side JavaScript to be executed with every pageview. On Wikipedia, this has led to a large number of additional tools and helpers developed through the wiki and shared among users. For instance, navigation popups is a custom JavaScript tool that shows previews of articles when the user hovers over links and also provides shortcuts for common maintenance tasks.[48]

The entire MediaWiki user interface can be edited through the wiki itself by users with the necessary permissions (typically called "administrators"). This is done through a special namespace with the prefix "MediaWiki:", where each page title identifies a particular user interface message. Using an extension,[49] it is also possible for a user to create personal scripts, and to choose whether certain sitewide scripts should apply to them by toggling the appropriate options in the user preferences page.

The "MediaWiki:" namespace was originally also used for creating custom text blocks that could then be dynamically loaded into other pages using a special syntax. This content was later moved into its own namespace, "Template:".

Templates are text blocks that can be dynamically loaded inside another page whenever that page is requested. The template is a special link in double curly brackets (for example "date=October 2018"), which calls the template (in this case located at Template:Disputed) to load in place of the template call.

Templates are structured documents containing attribute–value pairs. They are defined with parameters, to which are assigned values when transcluded on an article page. The name of the parameter is delimited from the value by an equals sign. A class of templates known as infoboxes is used on Wikipedia to collect and present a subset of information about its subject, usually on the top (mobile view) or top right-hand corner (desktop view) of the document.

Pages in other namespaces can also be transcluded as templates. In particular, a page in the main namespace can be transcluded by prefixing its title with a colon; for example, :MediaWiki transcludes the article "MediaWiki" from the main namespace. Also, it is possible to mark the portions of a page that should be transcluded in several ways, the most basic of which are:[50]

<noinclude>...</noinclude>, which marks content that is not to be transcluded;<includeonly>...</includeonly>, which marks content that is not rendered unless it is transcluded;<onlyinclude>...</onlyinclude>, which marks content that is to be the only content transcluded.A related method, called template substitution (called by adding subst: at the beginning of a template link) inserts the contents of the template into the target page (like a copy and paste operation), instead of loading the template contents dynamically whenever the page is loaded. This can lead to inconsistency when using templates, but may be useful in certain cases, and in most cases requires fewer server resources (the actual amount of savings can vary depending on wiki configuration and the complexity of the template).

Templates have found many different uses. Templates enable users to create complex table layouts that are used consistently across multiple pages, and where only the content of the tables gets inserted using template parameters. Templates are frequently used to identify problems with a Wikipedia article by putting a template in the article. This template then outputs a graphical box stating that the article content is disputed or in need of some other attention, and also categorize it so that articles of this nature can be located. Templates are also used on user pages to send users standard messages welcoming them to the site,[51] giving them awards for outstanding contributions,[52][53] warning them when their behavior is considered inappropriate,[54] notifying them when they are blocked from editing,[55] and so on.

MediaWiki offers flexibility in creating and defining user groups. For instance, it would be possible to create an arbitrary "ninja" group that can block users and delete pages, and whose edits are hidden by default in the recent changes log. It is also possible to set up a group of "autoconfirmed" users that one becomes a member of after making a certain number of edits and waiting a certain number of days.[56] Some groups that are enabled by default are bureaucrats and sysops. Bureaucrats have the power to change other users' rights. Sysops have power over page protection and deletion and the blocking of users from editing. MediaWiki's available controls on editing rights have been deemed sufficient for publishing and maintaining important documents such as a manual of standard operating procedures in a hospital.[57]

MediaWiki comes with a basic set of features related to restricting access, but its original and ongoing design is driven by functions that largely relate to content, not content segregation. As a result, with minimal exceptions (related to specific tools and their related "Special" pages), page access control has never been a high priority in core development and developers have stated that users requiring secure user access and authorization controls should not rely on MediaWiki, since it was never designed for these kinds of situations. For instance, it is extremely difficult to create a wiki where only certain users can read and access some pages.[58] Here, wiki engines like Foswiki, MoinMoin and Confluence provide more flexibility by supporting advanced security mechanisms like access control lists.

The MediaWiki codebase contains various hooks using callback functions to add additional PHP code in an extensible way. This allows developers to write extensions without necessarily needing to modify the core or having to submit their code for review. Installing an extension typically consists of adding a line to the configuration file, though in some cases additional changes such as database updates or core patches are required.

Five main extension points were created to allow developers to add features and functionalities to MediaWiki. Hooks are run every time a certain event happens; for instance, the ArticleSaveComplete hook occurs after a save article request has been processed.[59] This can be used, for example, by an extension that notifies selected users whenever a page edit occurs on the wiki from new or anonymous users.[60] New tags can be created to process data with opening and closing tags (<newtag>...</newtag>).[61] Parser functions can be used to create a new command (...).[62] New special pages can be created to perform a specific function. These pages are dynamically generated. For example, a special page might show all pages that have one or more links to an external site or it might create a form providing user submitted feedback.[63] Skins allow users to customize the look and feel of MediaWiki.[64] A minor extension point allows the use of Amazon S3 to host image files.[65]

Among the most popular extensions is a parser function extension, ParserFunctions, which allows different content to be rendered based on the result of conditional statements.[66] These conditional statements can perform functions such as evaluating whether a parameter is empty, comparing strings, evaluating mathematical expressions, and returning one of two values depending on whether a page exists. It was designed as a replacement for a notoriously inefficient template called Qif.[67] Schindler recounts the history of the ParserFunctions extension as follows:[68]

In 2006 some Wikipedians discovered that through an intricate and complicated interplay of templating features and CSS they could create conditional wiki text, i.e. text that was displayed if a template parameter had a specific value. This included repeated calls of templates within templates, which bogged down the performance of the whole system. The developers faced the choice of either disallowing the spreading of an obviously desired feature by detecting such usage and explicitly disallowing it within the software or offering an efficient alternative. The latter was done by Tim Starling, who announced the introduction of parser functions, wiki text that calls functions implemented in the underlying software. At first, only conditional text and the computation of simple mathematical expressions were implemented, but this already increased the possibilities for wiki editors enormously. With time further parser functions were introduced, finally leading to a framework that allowed the simple writing of extension functions to add arbitrary functionalities, like e.g. geo-coding services or widgets. This time the developers were clearly reacting to the demand of the community, being forced either to fight the solution of the issue that the community had (i.e. conditional text), or offer an improved technical implementation to replace the previous practice and achieve an overall better performance.

Another parser functions extension, StringFunctions, was developed to allow evaluation of string length, string position, and so on. Wikimedia communities, having created awkward workarounds to accomplish the same functionality,[69] clamored for it to be enabled on their projects.[70] Much of its functionality was eventually integrated into the ParserFunctions extension,[71] albeit disabled by default and accompanied by a warning from Tim Starling that enabling string functions would allow users "to implement their own parsers in the ugliest, most inefficient programming language known to man: MediaWiki wikitext with ParserFunctions."[72]

Since 2012 an extension, Scribunto, has existed that allows for the creation of "modules"—wiki pages written in the scripting language Lua—which can then be run within templates and standard wiki pages. Scribunto has been installed on Wikipedia and other Wikimedia sites since 2013 and is used heavily on those sites. Scribunto code runs significantly faster than corresponding wikitext code using ParserFunctions.[73]

Another very popular extension is a citation extension that enables footnotes to be added to pages using inline references.[74] This extension has, however, been criticized for being difficult to use and requiring the user to memorize complex syntax. A gadget called RefToolbar attempts to make it easier to create citations using common templates. MediaWiki has some extensions that are well-suited for academia, such as mathematics extensions[75] and an extension that allows molecules to be rendered in 3D.[76]

A generic Widgets extension exists that allows MediaWiki to integrate with virtually anything. Other examples of extensions that could improve a wiki are category suggestion extensions[77] and extensions for inclusion of Flash Videos,[78] YouTube videos,[79] and RSS feeds.[80] Metavid, a site that archives video footage of the U.S. Senate and House floor proceedings, was created using code extending MediaWiki into the domain of collaborative video authoring.[81]

There are many spambots that search the web for MediaWiki installations and add linkspam to them, despite the fact that MediaWiki uses the nofollow attribute to discourage such attempts at search engine optimization.[82] Part of the problem is that third party republishers, such as mirrors, may not independently implement the nofollow tag on their websites, so marketers can still get PageRank benefit by inserting links into pages when those entries appear on third party websites.[83] Anti-spam extensions have been developed to combat the problem by introducing CAPTCHAs,[84] blacklisting certain URLs,[85] and allowing bulk deletion of pages recently added by a particular user.[86]

MediaWiki comes pre-installed with a standard text-based search. Extensions exist to let MediaWiki use more sophisticated third-party search engines, including Elasticsearch (which since 2014 has been in use on Wikipedia), Lucene[87] and Sphinx.[88]

Various MediaWiki extensions have also been created to allow for more complex, faceted search, on both data entered within the wiki and on metadata such as pages' revision history.[89][90] Semantic MediaWiki is one such extension.[91][92]

Various extensions to MediaWiki support rich content generated through specialized syntax. These include mathematical formulas using LaTeX, graphical timelines over mathematical plotting, musical scores and Egyptian hieroglyphs.

The software supports a wide variety of uploaded media files, and allows image galleries and thumbnails to be generated with relative ease. There is also support for Exif metadata. MediaWiki operates the Wikimedia Commons, one of the largest free content media archives.

For WYSIWYG editing, VisualEditor is available to use in MediaWiki which simplifying editing process for editors and has been bundled since MediaWiki 1.35.[93] Other extensions exist for handling WYSIWYG editing to different degrees.[94]

MediaWiki can use either the MySQL/MariaDB, PostgreSQL or SQLite relational database management system. Support for Oracle Database and Microsoft SQL Server has been dropped since MediaWiki 1.34.[95] A MediaWiki database contains several dozen tables, including a page table that contains page titles, page ids, and other metadata;[96] and a revision table to which is added a new row every time an edit is made, containing the page id, a brief textual summary of the change performed, the user name of the article editor (or its IP address the case of an unregistered user) and a timestamp.[97][98]

In a 4½ year period prior to 2008, the MediaWiki database had 170 schema versions.[99] Possibly the largest schema change was done in 2005 with MediaWiki 1.5, when the storage of metadata was separated from that of content, to improve performance flexibility. When this upgrade was applied to Wikipedia, the site was locked for editing, and the schema was converted to the new version in about 22 hours. Some software enhancement proposals, such as a proposal to allow sections of articles to be watched via watchlist, have been rejected because the necessary schema changes would have required excessive Wikipedia downtime.[100]

Because it is used to run one of the highest-traffic sites on the Web, Wikipedia, MediaWiki's performance and scalability have been highly optimized.[101] MediaWiki supports Squid, load-balanced database replication, client-side caching, memcached or table-based caching for frequently accessed processing of query results, a simple static file cache, feature-reduced operation, revision compression, and a job queue for database operations. MediaWiki developers have attempted to optimize the software by avoiding expensive algorithms, database queries, etc., caching every result that is expensive and has temporal locality of reference, and focusing on the hot spots in the code through profiling.[102]

MediaWiki code is designed to allow for data to be written to a read-write database and read from read-only databases, although the read-write database can be used for some read operations if the read-only databases are not yet up to date. Metadata, such as article revision history, article relations (links, categories etc.), user accounts and settings can be stored in core databases and cached; the actual revision text, being more rarely used, can be stored as append-only blobs in external storage. The software is suitable for the operation of large-scale wiki farms such as Wikimedia, which had about 800 wikis as of August 2011. However, MediaWiki comes with no built-in GUI to manage such installations.

Empirical evidence shows most revisions in MediaWiki databases tend to differ only slightly from previous revisions. Therefore, subsequent revisions of an article can be concatenated and then compressed, achieving very high data compression ratios of up to 100×.[102]

For more information on the architecture, such as how it stores wikitext and assembles a page, see External links.

The parser serves as the de facto standard for the MediaWiki syntax, as no formal syntax has been defined. Due to this lack of a formal definition, it has been difficult to create WYSIWYG editors for MediaWiki, although several WYSIWYG extensions do exist, including the popular VisualEditor.

MediaWiki is not designed to be a suitable replacement for dedicated online forum or blogging software,[103] although extensions do exist to allow for both of these.[104][105]

It is common for new MediaWiki users to make certain mistakes, such as forgetting to sign posts with four tildes (~~~~),[106] or manually entering a plaintext signature,[107] due to unfamiliarity with the idiosyncratic particulars involved in communication on MediaWiki discussion pages. On the other hand, the format of these discussion pages has been cited as a strength by one educator, who stated that it provides more fine-grain capabilities for discussion than traditional threaded discussion forums. For example, instead of 'replying' to an entire message, the participant in a discussion can create a hyperlink to a new wiki page on any word from the original page. Discussions are easier to follow since the content is available via hyperlinked wiki page, rather than a series of reply messages on a traditional threaded discussion forum. However, except in few cases, students were not using this capability, possibly because of their familiarity with the traditional linear discussion style and a lack of guidance on how to make the content more 'link-rich'.[108]

MediaWiki by default has little support for the creation of dynamically assembled documents, or pages that aggregate data from other pages. Some research has been done on enabling such features directly within MediaWiki.[109] The Semantic MediaWiki extension provides these features. It is not in use on Wikipedia, but in more than 1,600 other MediaWiki installations.[110] The Wikibase Repository and Wikibase Repository client are however implemented in Wikidata and Wikipedia respectively, and to some extent provides semantic web features, and linking of centrally stored data to infoboxes in various Wikipedia articles.

Upgrading MediaWiki is usually fully automated, requiring no changes to the site content or template programming. Historically troubles have been encountered when upgrading from significantly older versions.[111]

MediaWiki developers have enacted security standards, both for core code and extensions.[112] SQL queries and HTML output are usually done through wrapper functions that handle validation, escaping, filtering for prevention of cross-site scripting and SQL injection.[113] Many security issues have had to be patched after a MediaWiki version release,[114] and accordingly MediaWiki.org states, "The most important security step you can take is to keep your software up to date" by subscribing to the announcement mailing list and installing security updates that are announced.[115]

Support for MediaWiki users consists of:

MediaWiki is free and open-source and is distributed under the terms of the GNU General Public License version 2 or any later version. Its documentation, located at its official website at www.mediawiki.org, is released under the Creative Commons BY-SA 4.0 license, with a set of help pages intended to be freely copied into fresh wiki installations and/or distributed with MediaWiki software in the public domain instead to eliminate legal issues for wikis with other licenses.[119][120] MediaWiki's development has generally favored the use of open-source media formats.[121]

MediaWiki has an active volunteer community for development and maintenance. MediaWiki developers are spread around the world, though with a majority in the United States and Europe. Face-to-face meetings and programming sessions for MediaWiki developers have been held once or several times a year since 2004.[122]

Anyone can submit patches to the project's Git/Gerrit repository.[123] There are also paid programmers who primarily develop projects for the Wikimedia Foundation. MediaWiki developers participate in the Google Summer of Code by facilitating the assignment of mentors to students wishing to work on MediaWiki core and extension projects.[124] During the year prior to November 2012, there were about two hundred developers who had committed changes to the MediaWiki core or extensions.[125] Major MediaWiki releases are generated approximately every six months by taking snapshots of the development branch, which is kept continuously in a runnable state;[126] minor releases, or point releases, are issued as needed to correct bugs (especially security problems). MediaWiki is developed on a continuous integration development model, in which software changes are pushed live to Wikimedia sites on regular basis.[126] MediaWiki also has a public bug tracker, phabricator.wikimedia.org, which runs Phabricator. The site is also used for feature and enhancement requests.

When Wikipedia was launched in January 2001, it ran on an existing wiki software system, UseModWiki. UseModWiki is written in the Perl programming language, and stores all wiki pages in text (.txt) files. This software soon proved to be limiting, in both functionality and performance. In mid-2001, Magnus Manske—a developer and student at the University of Cologne, as well as a Wikipedia editor—began working on new software that would replace UseModWiki, specifically designed for use by Wikipedia. This software was written in the PHP scripting language, and stored all of its information in a MySQL database. The new software was largely developed by August 24, 2001, and a test wiki for it was established shortly thereafter.

The first full implementation of this software was the new Meta Wikipedia on November 9, 2001. There was a desire to have it implemented immediately on the English-language Wikipedia.[127] However, Manske was apprehensive about any potential bugs harming the nascent website during the period of the final exams he had to complete immediately prior to Christmas;[128] this led to the launch on the English-language Wikipedia being delayed until January 25, 2002. The software was then, gradually, deployed on all the Wikipedia language sites of that time. This software was referred to as "the PHP script" and as "phase II", with the name "phase I", retroactively given to the use of UseModWiki.

Increasing usage soon caused load problems to arise again, and soon after, another rewrite of the software began; this time being done by Lee Daniel Crocker, which became known as "phase III". This new software was also written in PHP, with a MySQL backend, and kept the basic interface of the phase II software, but with the added functionality of a wider scalability. The "phase III" software went live on Wikipedia in July 2002.

The Wikimedia Foundation was announced on June 20, 2003. In July, Wikipedia contributor Daniel Mayer suggested the name "MediaWiki" for the software, as a play on "Wikimedia".[129] The MediaWiki name was gradually phased in, beginning in August 2003. The name has frequently caused confusion due to its (intentional) similarity to the "Wikimedia" name (which itself is similar to "Wikipedia").[130] The first version of MediaWiki, 1.1, was released in December 2003.

The old product logo was created by Erik Möller, using a flower photograph taken by Florence Nibart-Devouard, and was originally submitted to the logo contest for a new Wikipedia logo, held from July 20 to August 27, 2003.[131][132] The logo came in third place, and was chosen to represent MediaWiki rather than Wikipedia, with the second place logo being used for the Wikimedia Foundation.[133] The double square brackets ([[ ]]) symbolize the syntax MediaWiki uses for creating hyperlinks to other wiki pages; while the sunflower represents the diversity of content on Wikipedia, its constant growth, and the wilderness.[134]

Later, Brooke Vibber, the chief technical officer of the Wikimedia Foundation,[135] took up the role of release manager.[136][101]

Major milestones in MediaWiki's development have included: the categorization system (2004); parser functions, (2006); Flagged Revisions, (2008);[68] the "ResourceLoader", a delivery system for CSS and JavaScript (2011);[137] and the VisualEditor, a "what you see is what you get" (WYSIWYG) editing platform (2013).[138]

The contest of designing a new logo was initiated on June 22, 2020, as the old logo was a bitmap image and had "high details", leading to problems when rendering at high and low resolutions, respectively. After two rounds of voting, the new and current MediaWiki logo designed by Serhio Magpie was selected on October 24, 2020, and officially adopted on April 1, 2021.[139]

MediaWiki's most famous use has been in Wikipedia and, to a lesser degree, the Wikimedia Foundation's other projects. Fandom, a wiki hosting service formerly known as Wikia, runs on MediaWiki. Other public wikis that run on MediaWiki include wikiHow and SNPedia. WikiLeaks began as a MediaWiki-based site, but is no longer a wiki.

A number of alternative wiki encyclopedias to Wikipedia run on MediaWiki, including Citizendium, Metapedia, Scholarpedia and Conservapedia. MediaWiki is also used internally by a large number of companies, including Novell and Intel.[140][141]

Notable usages of MediaWiki within governments include Intellipedia, used by the United States Intelligence Community, Diplopedia, used by the United States Department of State, and milWiki, a part of milSuite used by the United States Department of Defense. United Nations agencies such as the United Nations Development Programme and INSTRAW chose to implement their wikis using MediaWiki, because "this software runs Wikipedia and is therefore guaranteed to be thoroughly tested, will continue to be developed well into the future, and future technicians on these wikis will be more likely to have exposure to MediaWiki than any other wiki software."[142]

The Free Software Foundation uses MediaWiki to implement the LibrePlanet site.[143]

Users of online collaboration software are familiar with MediaWiki's functions and layout due to its noted use on Wikipedia. A 2006 overview of social software in academia observed that "Compared to other wikis, MediaWiki is also fairly aesthetically pleasing, though simple, and has an easily customized side menu and stylesheet."[144] However, in one assessment in 2006, Confluence was deemed to be a superior product due to its very usable API and ability to better support multiple wikis.[76]

A 2009 study at the University of Hong Kong compared TWiki to MediaWiki. The authors noted that TWiki has been considered as a collaborative tool for the development of educational papers and technical projects, whereas MediaWiki's most noted use is on Wikipedia. Although both platforms allow discussion and tracking of progress, TWiki has a "Report" part that MediaWiki lacks. Students perceived MediaWiki as being easier to use and more enjoyable than TWiki. When asked whether they recommended using MediaWiki for knowledge management course group project, 15 out of 16 respondents expressed their preference for MediaWiki giving answers of great certainty, such as "of course", "for sure".[145] TWiki and MediaWiki both have flexible plug-in architecture.[146]

A 2009 study that compared students' experience with MediaWiki to that with Google Docs found that students gave the latter a much higher rating on user-friendly layout.[147]

A 2021 study conducted by the Brazilian Nuclear Engineering Institute compared a MediaWiki-based knowledge management system against two others that were based on DSpace and Open Journal Systems, respectively.[148] It highlighted ease of use as an advantage of the MediaWiki-based system, noting that because the Wikimedia Foundation had been developing MediaWiki for a site aimed at the general public (Wikipedia), "its user interface was designed to be more user-friendly from start, and has received large user feedback over a long time", in contrast to DSpace's and OJS's focus on niche audiences.[148]

488 languages (not including languages that are supported but have no translations)

|

|

This article needs to be updated. (December 2024)

|

|

|

This article is written like a personal reflection, personal essay, or argumentative essay that states a Wikipedia editor's personal feelings or presents an original argument about a topic. (January 2025)

|

|

|

This article has multiple issues. Please help improve it or discuss these issues on the talk page. (Learn how and when to remove these messages)

|

| Part of a series on |

| Internet marketing |

|---|

| Search engine marketing |

| Display advertising |

| Affiliate marketing |

| Mobile advertising |

Search engine optimization (SEO) is the process of improving the quality and quantity of website traffic to a website or a web page from search engines.[1][2] SEO targets unpaid search traffic (usually referred to as "organic" results) rather than direct traffic, referral traffic, social media traffic, or paid traffic.

Unpaid search engine traffic may originate from a variety of kinds of searches, including image search, video search, academic search,[3] news search, and industry-specific vertical search engines.

As an Internet marketing strategy, SEO considers how search engines work, the computer-programmed algorithms that dictate search engine results, what people search for, the actual search queries or keywords typed into search engines, and which search engines are preferred by a target audience. SEO is performed because a website will receive more visitors from a search engine when websites rank higher within a search engine results page (SERP), with the aim of either converting the visitors or building brand awareness.[4]

Webmasters and content providers began optimizing websites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, webmasters submitted the address of a page, or URL to the various search engines, which would send a web crawler to crawl that page, extract links to other pages from it, and return information found on the page to be indexed.[5]

According to a 2004 article by former industry analyst and current Google employee Danny Sullivan, the phrase "search engine optimization" probably came into use in 1997. Sullivan credits SEO practitioner Bruce Clay as one of the first people to popularize the term.[6]

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag or index files in engines like ALIWEB. Meta tags provide a guide to each page's content. Using metadata to index pages was found to be less than reliable, however, because the webmaster's choice of keywords in the meta tag could potentially be an inaccurate representation of the site's actual content. Flawed data in meta tags, such as those that were inaccurate or incomplete, created the potential for pages to be mischaracterized in irrelevant searches.[7][dubious – discuss] Web content providers also manipulated attributes within the HTML source of a page in an attempt to rank well in search engines.[8] By 1997, search engine designers recognized that webmasters were making efforts to rank in search engines and that some webmasters were manipulating their rankings in search results by stuffing pages with excessive or irrelevant keywords. Early search engines, such as Altavista and Infoseek, adjusted their algorithms to prevent webmasters from manipulating rankings.[9]

By heavily relying on factors such as keyword density, which were exclusively within a webmaster's control, early search engines suffered from abuse and ranking manipulation. To provide better results to their users, search engines had to adapt to ensure their results pages showed the most relevant search results, rather than unrelated pages stuffed with numerous keywords by unscrupulous webmasters. This meant moving away from heavy reliance on term density to a more holistic process for scoring semantic signals.[10]

Search engines responded by developing more complex ranking algorithms, taking into account additional factors that were more difficult for webmasters to manipulate.[citation needed]

Some search engines have also reached out to the SEO industry and are frequent sponsors and guests at SEO conferences, webchats, and seminars. Major search engines provide information and guidelines to help with website optimization.[11][12] Google has a Sitemaps program to help webmasters learn if Google is having any problems indexing their website and also provides data on Google traffic to the website.[13] Bing Webmaster Tools provides a way for webmasters to submit a sitemap and web feeds, allows users to determine the "crawl rate", and track the web pages index status.

In 2015, it was reported that Google was developing and promoting mobile search as a key feature within future products. In response, many brands began to take a different approach to their Internet marketing strategies.[14]

In 1998, two graduate students at Stanford University, Larry Page and Sergey Brin, developed "Backrub", a search engine that relied on a mathematical algorithm to rate the prominence of web pages. The number calculated by the algorithm, PageRank, is a function of the quantity and strength of inbound links.[15] PageRank estimates the likelihood that a given page will be reached by a web user who randomly surfs the web and follows links from one page to another. In effect, this means that some links are stronger than others, as a higher PageRank page is more likely to be reached by the random web surfer.

Page and Brin founded Google in 1998.[16] Google attracted a loyal following among the growing number of Internet users, who liked its simple design.[17] Off-page factors (such as PageRank and hyperlink analysis) were considered as well as on-page factors (such as keyword frequency, meta tags, headings, links and site structure) to enable Google to avoid the kind of manipulation seen in search engines that only considered on-page factors for their rankings. Although PageRank was more difficult to game, webmasters had already developed link-building tools and schemes to influence the Inktomi search engine, and these methods proved similarly applicable to gaming PageRank. Many sites focus on exchanging, buying, and selling links, often on a massive scale. Some of these schemes involved the creation of thousands of sites for the sole purpose of link spamming.[18]

By 2004, search engines had incorporated a wide range of undisclosed factors in their ranking algorithms to reduce the impact of link manipulation.[19] The leading search engines, Google, Bing, and Yahoo, do not disclose the algorithms they use to rank pages. Some SEO practitioners have studied different approaches to search engine optimization and have shared their personal opinions.[20] Patents related to search engines can provide information to better understand search engines.[21] In 2005, Google began personalizing search results for each user. Depending on their history of previous searches, Google crafted results for logged in users.[22]

In 2007, Google announced a campaign against paid links that transfer PageRank.[23] On June 15, 2009, Google disclosed that they had taken measures to mitigate the effects of PageRank sculpting by use of the nofollow attribute on links. Matt Cutts, a well-known software engineer at Google, announced that Google Bot would no longer treat any no follow links, in the same way, to prevent SEO service providers from using nofollow for PageRank sculpting.[24] As a result of this change, the usage of nofollow led to evaporation of PageRank. In order to avoid the above, SEO engineers developed alternative techniques that replace nofollowed tags with obfuscated JavaScript and thus permit PageRank sculpting. Additionally, several solutions have been suggested that include the usage of iframes, Flash, and JavaScript.[25]

In December 2009, Google announced it would be using the web search history of all its users in order to populate search results.[26] On June 8, 2010 a new web indexing system called Google Caffeine was announced. Designed to allow users to find news results, forum posts, and other content much sooner after publishing than before, Google Caffeine was a change to the way Google updated its index in order to make things show up quicker on Google than before. According to Carrie Grimes, the software engineer who announced Caffeine for Google, "Caffeine provides 50 percent fresher results for web searches than our last index..."[27] Google Instant, real-time-search, was introduced in late 2010 in an attempt to make search results more timely and relevant. Historically site administrators have spent months or even years optimizing a website to increase search rankings. With the growth in popularity of social media sites and blogs, the leading engines made changes to their algorithms to allow fresh content to rank quickly within the search results.[28]

In February 2011, Google announced the Panda update, which penalizes websites containing content duplicated from other websites and sources. Historically websites have copied content from one another and benefited in search engine rankings by engaging in this practice. However, Google implemented a new system that punishes sites whose content is not unique.[29] The 2012 Google Penguin attempted to penalize websites that used manipulative techniques to improve their rankings on the search engine.[30] Although Google Penguin has been presented as an algorithm aimed at fighting web spam, it really focuses on spammy links[31] by gauging the quality of the sites the links are coming from. The 2013 Google Hummingbird update featured an algorithm change designed to improve Google's natural language processing and semantic understanding of web pages. Hummingbird's language processing system falls under the newly recognized term of "conversational search", where the system pays more attention to each word in the query in order to better match the pages to the meaning of the query rather than a few words.[32] With regards to the changes made to search engine optimization, for content publishers and writers, Hummingbird is intended to resolve issues by getting rid of irrelevant content and spam, allowing Google to produce high-quality content and rely on them to be 'trusted' authors.

In October 2019, Google announced they would start applying BERT models for English language search queries in the US. Bidirectional Encoder Representations from Transformers (BERT) was another attempt by Google to improve their natural language processing, but this time in order to better understand the search queries of their users.[33] In terms of search engine optimization, BERT intended to connect users more easily to relevant content and increase the quality of traffic coming to websites that are ranking in the Search Engine Results Page.

The leading search engines, such as Google, Bing, and Yahoo!, use crawlers to find pages for their algorithmic search results. Pages that are linked from other search engine-indexed pages do not need to be submitted because they are found automatically. The Yahoo! Directory and DMOZ, two major directories which closed in 2014 and 2017 respectively, both required manual submission and human editorial review.[34] Google offers Google Search Console, for which an XML Sitemap feed can be created and submitted for free to ensure that all pages are found, especially pages that are not discoverable by automatically following links[35] in addition to their URL submission console.[36] Yahoo! formerly operated a paid submission service that guaranteed to crawl for a cost per click;[37] however, this practice was discontinued in 2009.

Search engine crawlers may look at a number of different factors when crawling a site. Not every page is indexed by search engines. The distance of pages from the root directory of a site may also be a factor in whether or not pages get crawled.[38]

Mobile devices are used for the majority of Google searches.[39] In November 2016, Google announced a major change to the way they are crawling websites and started to make their index mobile-first, which means the mobile version of a given website becomes the starting point for what Google includes in their index.[40] In May 2019, Google updated the rendering engine of their crawler to be the latest version of Chromium (74 at the time of the announcement). Google indicated that they would regularly update the Chromium rendering engine to the latest version.[41] In December 2019, Google began updating the User-Agent string of their crawler to reflect the latest Chrome version used by their rendering service. The delay was to allow webmasters time to update their code that responded to particular bot User-Agent strings. Google ran evaluations and felt confident the impact would be minor.[42]

To avoid undesirable content in the search indexes, webmasters can instruct spiders not to crawl certain files or directories through the standard robots.txt file in the root directory of the domain. Additionally, a page can be explicitly excluded from a search engine's database by using a meta tag specific to robots (usually <meta name="robots" content="noindex"> ). When a search engine visits a site, the robots.txt located in the root directory is the first file crawled. The robots.txt file is then parsed and will instruct the robot as to which pages are not to be crawled. As a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not wish to crawl. Pages typically prevented from being crawled include login-specific pages such as shopping carts and user-specific content such as search results from internal searches. In March 2007, Google warned webmasters that they should prevent indexing of internal search results because those pages are considered search spam.[43]

In 2020, Google sunsetted the standard (and open-sourced their code) and now treats it as a hint rather than a directive. To adequately ensure that pages are not indexed, a page-level robot's meta tag should be included.[44]

A variety of methods can increase the prominence of a webpage within the search results. Cross linking between pages of the same website to provide more links to important pages may improve its visibility. Page design makes users trust a site and want to stay once they find it. When people bounce off a site, it counts against the site and affects its credibility.[45]

Writing content that includes frequently searched keyword phrases so as to be relevant to a wide variety of search queries will tend to increase traffic. Updating content so as to keep search engines crawling back frequently can give additional weight to a site. Adding relevant keywords to a web page's metadata, including the title tag and meta description, will tend to improve the relevancy of a site's search listings, thus increasing traffic. URL canonicalization of web pages accessible via multiple URLs, using the canonical link element[46] or via 301 redirects can help make sure links to different versions of the URL all count towards the page's link popularity score. These are known as incoming links, which point to the URL and can count towards the page link's popularity score, impacting the credibility of a website.[45]

SEO techniques can be classified into two broad categories: techniques that search engine companies recommend as part of good design ("white hat"), and those techniques of which search engines do not approve ("black hat"). Search engines attempt to minimize the effect of the latter, among them spamdexing. Industry commentators have classified these methods and the practitioners who employ them as either white hat SEO or black hat SEO.[47] White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned either temporarily or permanently once the search engines discover what they are doing.[48]

An SEO technique is considered a white hat if it conforms to the search engines' guidelines and involves no deception. As the search engine guidelines[11][12][49] are not written as a series of rules or commandments, this is an important distinction to note. White hat SEO is not just about following guidelines but is about ensuring that the content a search engine indexes and subsequently ranks is the same content a user will see. White hat advice is generally summed up as creating content for users, not for search engines, and then making that content easily accessible to the online "spider" algorithms, rather than attempting to trick the algorithm from its intended purpose. White hat SEO is in many ways similar to web development that promotes accessibility,[50] although the two are not identical.

Black hat SEO attempts to improve rankings in ways that are disapproved of by the search engines or involve deception. One black hat technique uses hidden text, either as text colored similar to the background, in an invisible div, or positioned off-screen. Another method gives a different page depending on whether the page is being requested by a human visitor or a search engine, a technique known as cloaking. Another category sometimes used is grey hat SEO. This is in between the black hat and white hat approaches, where the methods employed avoid the site being penalized but do not act in producing the best content for users. Grey hat SEO is entirely focused on improving search engine rankings.

Search engines may penalize sites they discover using black or grey hat methods, either by reducing their rankings or eliminating their listings from their databases altogether. Such penalties can be applied either automatically by the search engines' algorithms or by a manual site review. One example was the February 2006 Google removal of both BMW Germany and Ricoh Germany for the use of deceptive practices.[51] Both companies subsequently apologized, fixed the offending pages, and were restored to Google's search engine results page.[52]

Companies that employ black hat techniques or other spammy tactics can get their client websites banned from the search results. In 2005, the Wall Street Journal reported on a company, Traffic Power, which allegedly used high-risk techniques and failed to disclose those risks to its clients.[53] Wired magazine reported that the same company sued blogger and SEO Aaron Wall for writing about the ban.[54] Google's Matt Cutts later confirmed that Google had banned Traffic Power and some of its clients.[55]

SEO is not an appropriate strategy for every website, and other Internet marketing strategies can be more effective, such as paid advertising through pay-per-click (PPC) campaigns, depending on the site operator's goals.[editorializing] Search engine marketing (SEM) is the practice of designing, running, and optimizing search engine ad campaigns. Its difference from SEO is most simply depicted as the difference between paid and unpaid priority ranking in search results. SEM focuses on prominence more so than relevance; website developers should regard SEM with the utmost importance with consideration to visibility as most navigate to the primary listings of their search.[56] A successful Internet marketing campaign may also depend upon building high-quality web pages to engage and persuade internet users, setting up analytics programs to enable site owners to measure results, and improving a site's conversion rate.[57][58] In November 2015, Google released a full 160-page version of its Search Quality Rating Guidelines to the public,[59] which revealed a shift in their focus towards "usefulness" and mobile local search. In recent years the mobile market has exploded, overtaking the use of desktops, as shown in by StatCounter in October 2016, where they analyzed 2.5 million websites and found that 51.3% of the pages were loaded by a mobile device.[60] Google has been one of the companies that are utilizing the popularity of mobile usage by encouraging websites to use their Google Search Console, the Mobile-Friendly Test, which allows companies to measure up their website to the search engine results and determine how user-friendly their websites are. The closer the keywords are together their ranking will improve based on key terms.[45]

SEO may generate an adequate return on investment. However, search engines are not paid for organic search traffic, their algorithms change, and there are no guarantees of continued referrals. Due to this lack of guarantee and uncertainty, a business that relies heavily on search engine traffic can suffer major losses if the search engines stop sending visitors.[61] Search engines can change their algorithms, impacting a website's search engine ranking, possibly resulting in a serious loss of traffic. According to Google's CEO, Eric Schmidt, in 2010, Google made over 500 algorithm changes – almost 1.5 per day.[62] It is considered a wise business practice for website operators to liberate themselves from dependence on search engine traffic.[63] In addition to accessibility in terms of web crawlers (addressed above), user web accessibility has become increasingly important for SEO.

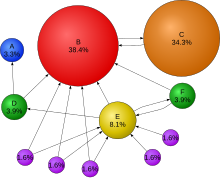

Optimization techniques are highly tuned to the dominant search engines in the target market. The search engines' market shares vary from market to market, as does competition. In 2003, Danny Sullivan stated that Google represented about 75% of all searches.[64] In markets outside the United States, Google's share is often larger, and data showed Google was the dominant search engine worldwide as of 2007.[65] As of 2006, Google had an 85–90% market share in Germany.[66] While there were hundreds of SEO firms in the US at that time, there were only about five in Germany.[66] As of March 2024, Google still had a significant market share of 89.85% in Germany.[67] As of June 2008, the market share of Google in the UK was close to 90% according to Hitwise.[68][obsolete source] As of March 2024, Google's market share in the UK was 93.61%.[69]

Successful search engine optimization (SEO) for international markets requires more than just translating web pages. It may also involve registering a domain name with a country-code top-level domain (ccTLD) or a relevant top-level domain (TLD) for the target market, choosing web hosting with a local IP address or server, and using a Content Delivery Network (CDN) to improve website speed and performance globally. It is also important to understand the local culture so that the content feels relevant to the audience. This includes conducting keyword research for each market, using hreflang tags to target the right languages, and building local backlinks. However, the core SEO principles—such as creating high-quality content, improving user experience, and building links—remain the same, regardless of language or region.[66]

Regional search engines have a strong presence in specific markets:

By the early 2000s, businesses recognized that the web and search engines could help them reach global audiences. As a result, the need for multilingual SEO emerged.[74] In the early years of international SEO development, simple translation was seen as sufficient. However, over time, it became clear that localization and transcreation—adapting content to local language, culture, and emotional resonance—were far more effective than basic translation.[75]

On October 17, 2002, SearchKing filed suit in the United States District Court, Western District of Oklahoma, against the search engine Google. SearchKing's claim was that Google's tactics to prevent spamdexing constituted a tortious interference with contractual relations. On May 27, 2003, the court granted Google's motion to dismiss the complaint because SearchKing "failed to state a claim upon which relief may be granted."[76][77]

In March 2006, KinderStart filed a lawsuit against Google over search engine rankings. KinderStart's website was removed from Google's index prior to the lawsuit, and the amount of traffic to the site dropped by 70%. On March 16, 2007, the United States District Court for the Northern District of California (San Jose Division) dismissed KinderStart's complaint without leave to amend and partially granted Google's motion for Rule 11 sanctions against KinderStart's attorney, requiring him to pay part of Google's legal expenses.[78][79]

A digital agency in Sydney can offer a comprehensive approach, combining SEO with other marketing strategies like social media, PPC, and content marketing. By integrating these services, they help you achieve a stronger online presence and better ROI.

An SEO consultant in Sydney can provide tailored advice and strategies that align with your business's goals and local market conditions. They bring expertise in keyword selection, content optimization, technical SEO, and performance monitoring, helping you achieve better search rankings and more organic traffic.

SEO, or search engine optimisation, is the practice of improving a website's visibility on search engines like Google. It involves optimizing various elements of a site such as keywords, content, meta tags, and technical structure to help it rank higher in search results.

Local SEO helps small businesses attract customers from their immediate area, which is crucial for brick-and-mortar stores and service providers. By optimizing local listings, using location-based keywords, and maintaining accurate NAP information, you increase visibility, build trust, and drive more foot traffic.

SEO marketing is the process of using search engine optimization techniques to enhance your online presence. By optimizing your website, creating relevant content, and building authority, you attract organic traffic from search engines, increase brand awareness, and drive conversions.